An Overview

AI Guided Telemedicine is an AI-supported telemedicine platform designed to reduce anxiety, prevent mistakes, and guide older adults step by step through remote healthcare. This project began with a simple observation: many telemedicine platforms work well technically, but they do not feel emotionally safe. I wanted to explore how AI could function not just as a medical tool, but as a layer of reassurance that supports users throughout the entire experience.

Context

This was an academic UX project where I worked independently as the sole designer. I led the process from early research and empathy interviews to wireframes, usability testing, and high-fidelity prototypes. The focus was not just on improving usability, but on understanding how fear and uncertainty affect digital healthcare experiences, especially for older adults.

Challenge

Through empathy interviews, I discovered that the biggest barrier to telemedicine was not technology itself, but fear of making mistakes. Users were anxious about pressing the wrong button, accidentally ending a call, or not knowing what would happen next. Even small interface errors reduced their confidence significantly. The challenge became designing a system that feels clear, forgiving, and supportive rather than overwhelming.

Research

I conducted semi-structured interviews with older adults who have experience using remote healthcare platforms. Instead of asking only functional questions like “Was the app easy to use?”, I focused on emotional reactions. I asked questions such as, “What part of the process makes you feel nervous?”, “Have you ever hesitated before clicking a button?”, and “What worries you most during a video appointment?” I also asked them to walk me through their last telemedicine experience step by step, so I could understand where confusion or anxiety appeared.

During these conversations, patterns quickly emerged. Many users paused before taking action because they were unsure of the consequences. They often asked themselves, “If I click this, will it end the call?” or “What happens next?” This hesitation showed that uncertainty, not complexity, was the main issue. Users repeatedly mentioned that they wanted clear, step-by-step guidance and reassurance that nothing irreversible would happen.

In addition to interviews, I reviewed several existing telemedicine interfaces and analyzed their structure. I noticed that many platforms prioritize speed and efficiency, assuming users want to move quickly. However, very few systems address emotional safety or provide thoughtful recovery mechanisms. Error prevention and reassurance were often treated as secondary details rather than core design elements.

This research phase helped me reframe the problem. The issue was not about simplifying technology further, but about designing for confidence and reducing fear.

Key Insights

Across interviews, the strongest insight was that confusion creates anxiety faster than technical difficulty. Users wanted to understand what the system was doing and why. They preferred transparency over automation. They also valued recovery mechanisms, because knowing they could undo mistakes made them more willing to engage with the system.

Design Goals

The main goal was to build an AI-guided telemedicine experience that prioritizes clarity, reassurance, and mistake prevention. I wanted the AI to function as the primary interaction layer, guiding users from symptom input to diagnosis and specialist booking. Accessibility needed to feel supportive rather than isolating, and recovery needed to be emotionally calming.

Synthesis & Ideation

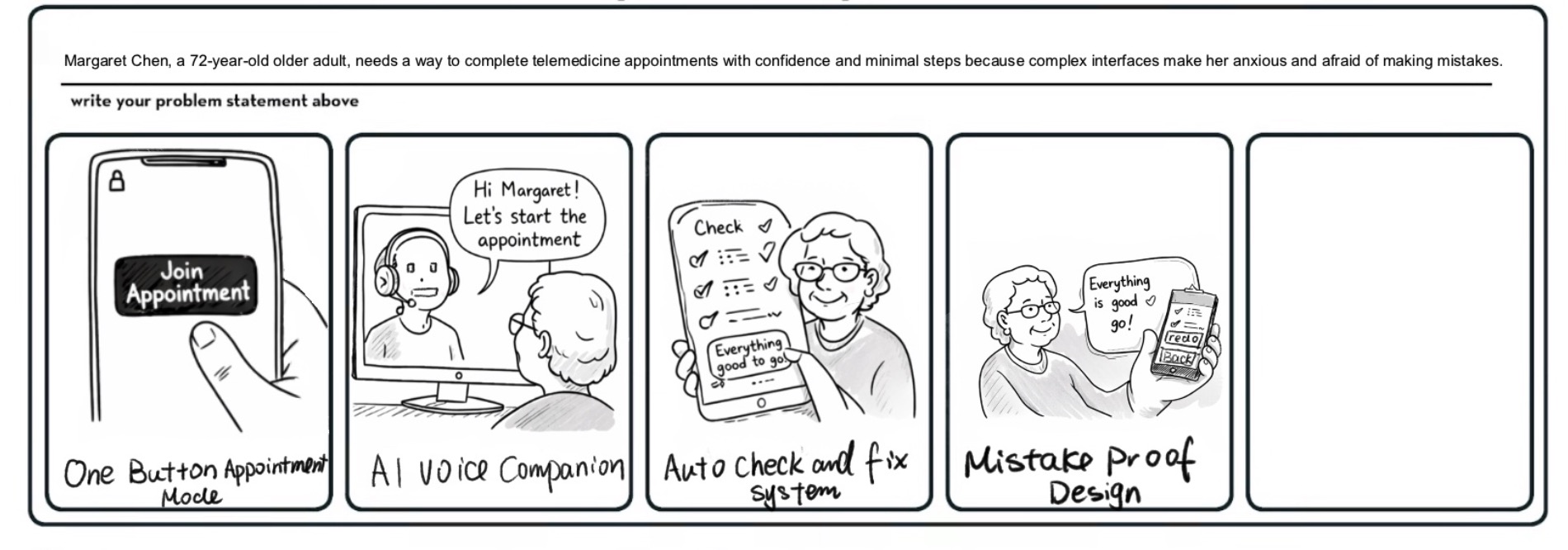

After organizing the research, I began exploring multiple directions. I considered simplifying the appointment flow, introducing a voice companion, and building an automatic technical check system. These ideas focused mainly on efficiency and reducing friction. However, when I revisited the interview transcripts, one theme became impossible to ignore: fear of making mistakes.

Users were not asking for faster systems. They were asking for reassurance. They wanted to feel safe pressing buttons. They wanted to know what would happen next. That realization shifted the direction of the project. Instead of designing for speed, I decided to design for confidence.

This is where two core concepts emerged: AI guidance and Senior Mode.

AI would become more than a chatbot. It would act as a step-by-step guide throughout the experience, reducing uncertainty and explaining what is happening at each stage. At the same time, I introduced Senior Mode as an optional accessibility feature. Rather than redesigning the entire interface, Senior Mode keeps the structure familiar while enhancing readability and clarity. It increases text size, strengthens contrast, enlarges icons, and activates automatic voice guidance. The intention was not to simplify the product into something different, but to reduce cognitive load without removing autonomy.

Together, these ideas reframed the product as an AI-supported environment designed to prevent anxiety before it starts.

How & Design

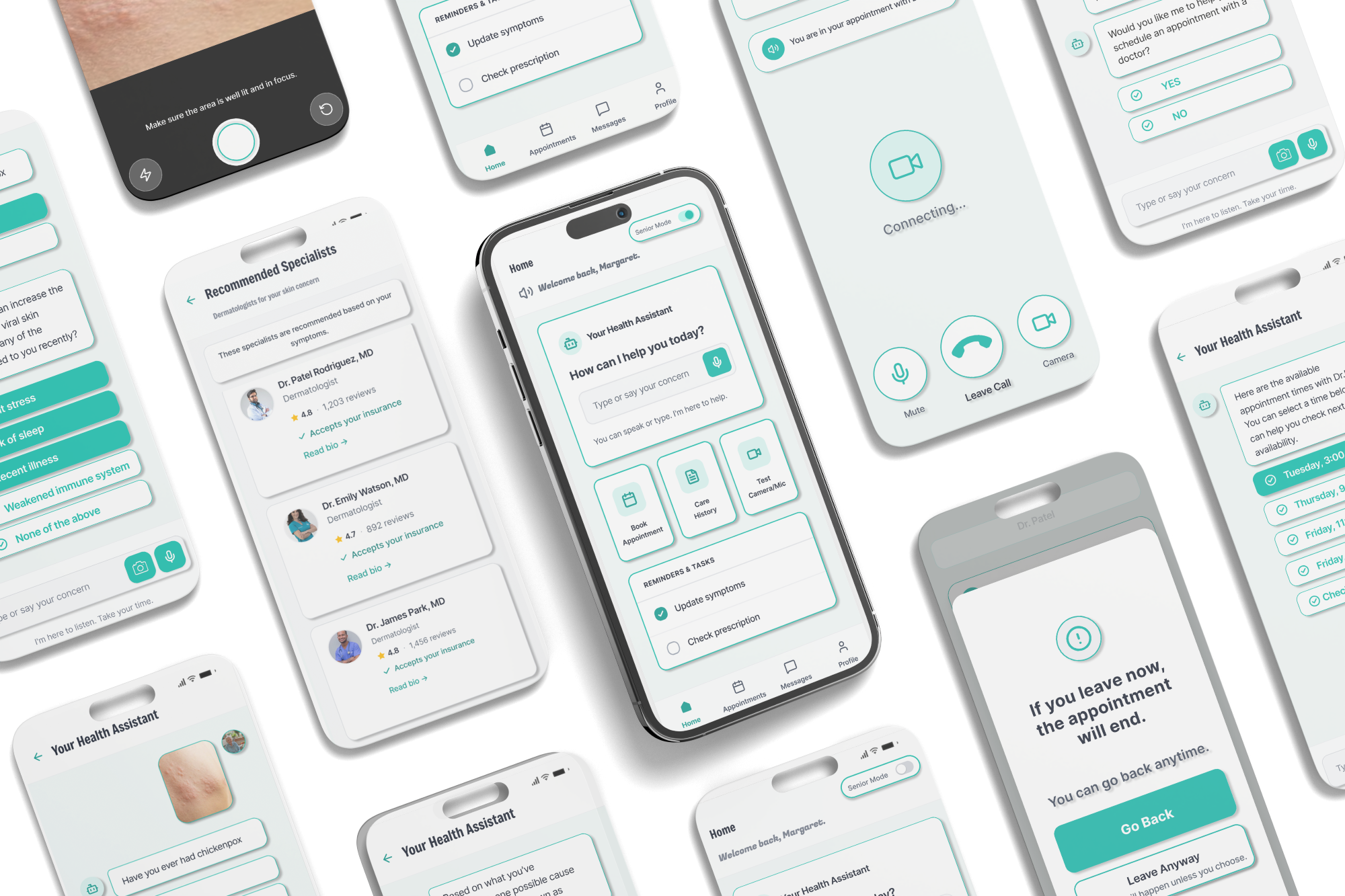

The final structure places AI at the center of the experience. Users begin by describing their symptoms in natural language, either through typing or voice input. I intentionally avoided preset options in the beginning to make the interaction feel open and nonjudgmental. For skin-related issues, the system allows optional photo uploads to improve diagnostic accuracy.

Instead of jumping directly to a conclusion, the AI explains how it interprets the information before presenting a possible diagnosis. It might summarize symptoms, connect them to potential causes, and clarify uncertainty. This transparency builds trust, especially since usability testing showed that users felt uncomfortable when diagnoses appeared too suddenly.
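The ordering described above can be sketched in a few lines of Python. This is only an illustration of the sequencing (summary, then reasoning, then uncertainty, then the possible condition last). The prototype itself was a design artifact, so `Step`, `build_reply`, and the stage names are hypothetical, and the example inputs are placeholders rather than medical content.

```python
from typing import List, NamedTuple


class Step(NamedTuple):
    stage: str  # hypothetical stage label
    text: str   # what the assistant would say at this stage


def build_reply(
    symptoms: List[str],
    possible_causes: List[str],
    uncertainty_note: str,
    possible_condition: str,
) -> List[Step]:
    """Order the reply so the possible condition is always shown last."""
    return [
        Step("summary", "Here is what I understood: " + ", ".join(symptoms) + "."),
        Step("reasoning", "These can be linked to: " + ", ".join(possible_causes) + "."),
        Step("uncertainty", uncertainty_note),
        Step("possible_condition", "One possibility is " + possible_condition + "."),
    ]


# Placeholder inputs, not real medical content.
reply = build_reply(
    symptoms=["symptom A", "symptom B"],
    possible_causes=["cause X", "cause Y"],
    uncertainty_note="I cannot be certain from a description alone.",
    possible_condition="condition Z",
)
for step in reply:
    print(f"[{step.stage}] {step.text}")
```

Keeping the stages as an ordered list makes the design intent explicit: the interface can reveal one step at a time, and the possible condition never appears before the explanation.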

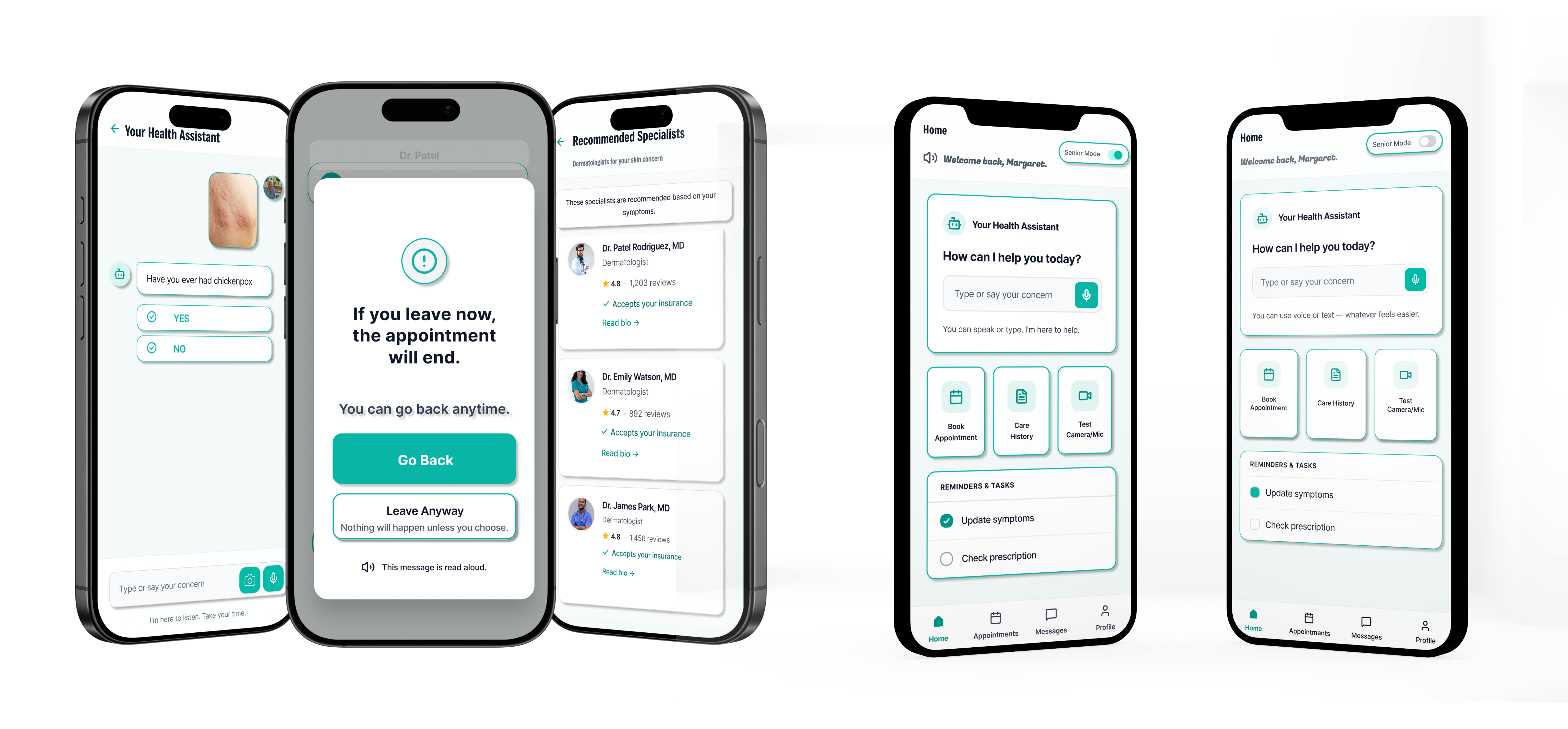

Senior Mode operates seamlessly within the same structure. When activated, the layout does not change dramatically, which preserves familiarity. However, typography becomes larger, contrast increases, spacing becomes more relaxed, and icons are easier to tap. In addition, the AI automatically reads key sections aloud, helping users understand what each module does without relying only on visual scanning. This reduces cognitive strain and supports older adults who may feel overwhelmed by dense information.
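One way to picture Senior Mode is as a set of overrides applied on top of the same layout, rather than a separate interface. The sketch below assumes a hypothetical `DisplaySettings` record. The field names and the specific numbers (1.5x text, 1.25x spacing, 64 px tap targets) are illustrative assumptions, not values taken from the design.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class DisplaySettings:
    layout: str = "standard"      # layout identifier; Senior Mode never changes it
    font_scale: float = 1.0
    high_contrast: bool = False
    spacing_scale: float = 1.0
    min_tap_target_px: int = 44   # assumed default tap target size
    auto_read_aloud: bool = False


def apply_senior_mode(base: DisplaySettings) -> DisplaySettings:
    """Return a copy with readability overrides; the layout is untouched."""
    return replace(
        base,
        font_scale=base.font_scale * 1.5,                     # larger typography
        high_contrast=True,                                   # stronger contrast
        spacing_scale=base.spacing_scale * 1.25,              # more relaxed spacing
        min_tap_target_px=max(base.min_tap_target_px, 64),    # icons easier to tap
        auto_read_aloud=True,                                 # automatic voice guidance
    )


default = DisplaySettings()
senior = apply_senior_mode(default)
print(senior)
```

Because the settings object is immutable and the function returns a copy, turning Senior Mode off is simply a matter of going back to the original settings.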

Mistake prevention is integrated into critical moments, particularly during video consultations. If a user accidentally taps “Leave,” the system does not immediately end the session. Instead, it pauses and presents a clear confirmation message using calm language. The user can safely return with one tap. Even if they exit, a recovery flow guides them back into the appointment without blame or urgency. These interactions may seem subtle, but they directly respond to the fear patterns uncovered in research.
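The leave and recovery behavior described above can be read as a small state machine. The following is a minimal sketch under that assumption. The state and event names (`tap_leave`, `tap_return`, `confirm_leave`, `rejoin`) are hypothetical labels chosen for illustration.

```python
from enum import Enum, auto
from typing import Iterable


class CallState(Enum):
    IN_CALL = auto()
    CONFIRM_LEAVE = auto()  # prompt is shown; the session has not ended yet
    EXITED = auto()


# (current state, event) -> next state. Event names are hypothetical.
TRANSITIONS = {
    (CallState.IN_CALL, "tap_leave"): CallState.CONFIRM_LEAVE,
    (CallState.CONFIRM_LEAVE, "tap_return"): CallState.IN_CALL,   # one tap back
    (CallState.CONFIRM_LEAVE, "confirm_leave"): CallState.EXITED,
    (CallState.EXITED, "rejoin"): CallState.IN_CALL,              # recovery flow
}


def next_state(state: CallState, event: str) -> CallState:
    """Return the next state; events that do not apply leave the state unchanged."""
    return TRANSITIONS.get((state, event), state)


def run(events: Iterable[str], start: CallState = CallState.IN_CALL) -> CallState:
    """Apply a sequence of events and return the final state."""
    state = start
    for event in events:
        state = next_state(state, event)
    return state


print(run(["tap_leave"]))                             # confirmation step
print(run(["tap_leave", "tap_return"]))               # safely back in the call
print(run(["tap_leave", "confirm_leave", "rejoin"]))  # recovered after exiting
```

The key property is that no single event moves a user from `IN_CALL` to `EXITED`: an accidental tap on “Leave” only ever reaches the confirmation step.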

The overall design balances guidance, transparency, and recovery, transforming telemedicine from a task-based tool into a supportive experience.

Usability Testing

To test the structure, I used the Wizard of Oz method and acted as the AI behind the scenes. I asked users to complete tasks like turning on Senior Mode, describing symptoms in the AI chat, uploading a photo, choosing a doctor, and recovering after accidentally exiting the flow. I carefully observed where users hesitated or felt unsure.

Users understood the overall concept, but several clarity issues appeared. The Senior Mode button was easy to miss, so I increased its visibility, improved contrast, and added a clearer explanation in the high-fidelity version. On the home screen, the AI chat was not clearly the main entry point. I reorganized the layout so the AI Health Assistant became more central and visually dominant.

Inside the chat, users were unsure what they could ask. I added a short introduction to clarify that the AI can help with symptoms, appointments, and doctor booking. Users also felt the diagnosis came too quickly. To fix this, I added a reasoning step where the AI explains how it interprets the symptoms before presenting a possible condition.

For skin issues, users wanted more than text descriptions, so I introduced an optional photo upload feature. I also strengthened the recovery flow with clearer language and feedback, and refined the typography to make the interface feel more professional and trustworthy.

Overall, the high-fidelity design focused on improving hierarchy, transparency, reassurance, and visual consistency.

Final Solution

The final design presents an AI-guided telemedicine platform that focuses on clarity, reassurance, and confidence. The AI becomes the first point of interaction. Users can describe their symptoms freely using text or voice, upload a photo when needed, and see how the AI is reasoning before receiving a possible diagnosis. This transparency helps reduce the feeling that the system is making decisions too quickly.

After presenting a likely condition, the AI provides practical next steps, including daily care suggestions and over-the-counter recommendations. If professional care is necessary, the AI guides the user directly into booking a specialist. The experience is continuous, so users never feel abandoned between diagnosis and action.

Senior Mode enhances accessibility by increasing text size, improving contrast, enlarging icons, and activating automatic voice guidance. The layout remains familiar, which helps users feel supported without feeling like they are using a completely different system.

Mistake prevention and recovery are built into critical moments, especially during video consultations. Instead of allowing irreversible errors, the system pauses, explains consequences, and offers safe recovery paths. The goal is not just functional safety, but emotional reassurance.

Impact

Through multiple iterations during my internship at Innovation AI, the project evolved from a basic telemedicine flow into a more structured, AI-first healthcare experience. The hierarchy became clearer, the AI’s role became more transparent, and the overall interface became visually more consistent. Usability testing showed how small details, such as confirmation language, button visibility, and explanation timing, can strongly influence user confidence and trust.

As part of Innovation AI’s broader exploration of AI-driven healthcare solutions, this project demonstrates how AI can reshape telemedicine by prioritizing emotional safety alongside efficiency. Instead of simply offering remote medical access, the platform focuses on creating a guided, supportive, and confidence-building experience for users.

Reflection & Next Steps

This project changed how I think about AI in healthcare. I realized that intelligence alone is not enough. AI must explain itself, slow down at the right moments, and support users emotionally as well as technically.

If I were to continue developing this project, I would conduct broader testing with older adults to measure confidence levels and task completion time more precisely. I would also explore ethical considerations around AI diagnosis transparency and risk communication.

Ultimately, this project reinforced the idea that good healthcare technology should reduce fear, not create it. Designing for confidence may be just as important as designing for efficiency.